Kubernetes (k8s) Cluster Deployment (Part 4): Complete Edition

Step 4

Kubernetes Node

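A Node is the machine that actually runs container instances; it can be regarded as a worker node. In this step we download the Kubernetes binaries and create the node certificates used for node registration and authentication. Kubernetes uses the Node Authorizer as one of its authorization modes; this mode specifically authorizes the API requests made by kubelets.

- Before starting, copy the required files (here the kubelet and kube-proxy binaries) from master1 to the Node machines: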

$ cd ~/kubernetes/server/bin

$ scp kubelet kube-proxy 192.168.184.29:/usr/local/bin/

$ scp kubelet kube-proxy 192.168.184.30:/usr/local/bin/

$ cd /etc/etcd/ssl

- Create the role binding:

$ kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

- Create the kubelet bootstrap.kubeconfig file. Set the cluster parameters:

$ kubectl config set-cluster kubernetes --certificate-authority=/etc/etcd/ssl/ca.pem --embed-certs=true --server=https://192.168.184.28:6443 --kubeconfig=bootstrap.kubeconfig

- Set the client authentication parameters; the token value is the one generated earlier:

$ cat /etc/etcd/ssl/bootstrap-token.csv
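The token passed to `--token` in the next command has to match the first field of the token file printed above. The part of this series that created that file is not reproduced here, so the following is only a sketch of how such a file is commonly produced. It assumes the static token file layout `token,user,uid,"group"`; the uid `10001`, the group name, and the temporary output file are illustrative, not taken from this cluster.

```shell
# Sketch only: generate a random token and a matching token-file line.
# The uid, group name and output location are illustrative assumptions.

# 16 random bytes rendered as 32 lowercase hex characters.
BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n')

# Assumed layout of one line: token,user,uid,"group"
TOKEN_FILE=$(mktemp)
printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' \
    "$BOOTSTRAP_TOKEN" > "$TOKEN_FILE"

# Read the token back from the first CSV field, as one would before
# handing it to `kubectl config set-credentials --token=...`.
TOKEN_FROM_FILE=$(cut -d, -f1 "$TOKEN_FILE")
echo "token: $TOKEN_FROM_FILE"
```

Reading the token back with `cut` rather than copying it by hand avoids a mismatch between the file used by the API server and the kubeconfig.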

$ kubectl config set-credentials kubelet-bootstrap --token=05c645cec943aef73c8b1f54464120c0 --kubeconfig=bootstrap.kubeconfig # --token is the token saved earlier

- Set the context parameters:

$ kubectl config set-context default --cluster=kubernetes --user=kubelet-bootstrap --kubeconfig=bootstrap.kubeconfig

- Select the default context, then distribute the bootstrap.kubeconfig file generated on the master to the nodes:

$ kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

$ mv bootstrap.kubeconfig /etc/etcd/cfg/

$ scp /etc/etcd/cfg/bootstrap.kubeconfig 192.168.184.29:/etc/etcd/cfg/

$ scp /etc/etcd/cfg/bootstrap.kubeconfig 192.168.184.30:/etc/etcd/cfg/

Deploy kubelet (run on work01 and work02)

- Set up CNI support:

$ mkdir -p /etc/etcd/cni/net.d # on 192.168.184.29

$ mkdir -p /etc/etcd/cni/net.d # on 192.168.184.30

$ cat > /etc/etcd/cni/net.d/10-default.conf <<EOF
{
  "name": "flannel",
  "type": "flannel",
  "delegate": {
    "bridge": "docker0",
    "isDefaultGateway": true,
    "mtu": 1400
  }
}
EOF

$ scp -r /etc/etcd/cni/net.d/10-default.conf 192.168.184.30:/etc/etcd/cni/net.d/10-default.conf

- Create the kubelet directory and the kubelet service unit:

$ mkdir /var/lib/kubelet # on 192.168.184.29

$ mkdir /var/lib/kubelet # on 192.168.184.30

$ cat > /usr/lib/systemd/system/kubelet.service <<EOF
[Unit]
Description=Kubernetes Kubelet
Documentation=https://github.com/GoogleCloudPlatform/kubernetes
After=docker.service
Requires=docker.service

[Service]
WorkingDirectory=/var/lib/kubelet
ExecStart=/usr/local/bin/kubelet \
  --address=192.168.184.29 \
  --hostname-override=192.168.184.29 \
  --pod-infra-container-image=mirrorgooglecontainers/pause-amd64:3.0 \
  --experimental-bootstrap-kubeconfig=/etc/etcd/cfg/bootstrap.kubeconfig \
  --kubeconfig=/etc/etcd/cfg/kubelet.kubeconfig \
  --cert-dir=/etc/etcd/ssl \
  --network-plugin=cni \
  --cni-conf-dir=/etc/etcd/cni/net.d \
  --cni-bin-dir=/usr/local/bin/cni \
  --cluster-dns=10.1.0.2 \
  --cluster-domain=cluster.local. \
  --hairpin-mode hairpin-veth \
  --allow-privileged=true \
  --fail-swap-on=false \
  --v=2 \
  --logtostderr=false \
  --log-dir=/etc/etcd/log
Restart=on-failure
RestartSec=5

[Install]
WantedBy=multi-user.target
EOF

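$ scp -r /usr/lib/systemd/system/kubelet.service 192.168.184.30:/usr/lib/systemd/system/kubelet.service

On 192.168.184.30, change --address and --hostname-override in the copied file to that node's IP.

- Start the service: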

$ systemctl daemon-reload

$ systemctl enable kubelet.service && systemctl start kubelet.service

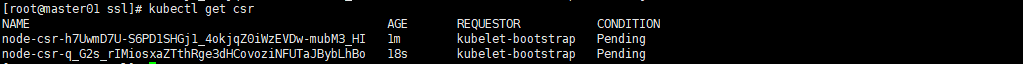

$ systemctl status kubelet.service

- Check the CSR requests (note: run this on master01):

$ kubectl get csr

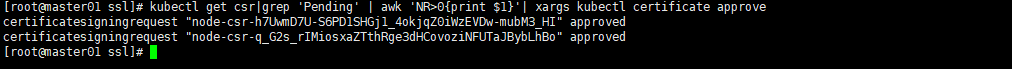

- Approve the kubelet TLS certificate requests:

$ kubectl get csr|grep 'Pending' | awk 'NR>0{print $1}'| xargs kubectl certificate approve

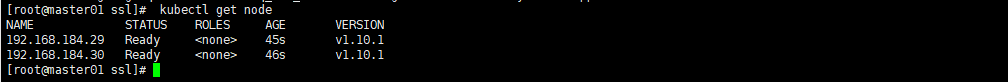

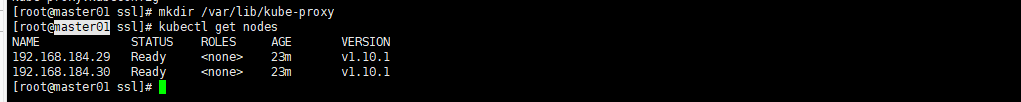
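To see what the approval pipeline above selects before anything is approved, the filter can be tried on captured output first. The sample text below is a hypothetical stand-in for `kubectl get csr` output (the names are made up and the column layout NAME, AGE, REQUESTOR, CONDITION is assumed); it is not taken from this cluster. The sketch also matches on the last column instead of using `grep`, so a line is selected only when its condition is exactly `Pending`.

```shell
# Hypothetical sample of `kubectl get csr` output; names are made up.
sample_csr_output() {
cat <<'EOF'
NAME              AGE   REQUESTOR           CONDITION
node-csr-aaa111   2m    kubelet-bootstrap   Pending
node-csr-bbb222   2m    kubelet-bootstrap   Pending
node-csr-ccc333   1h    kubelet-bootstrap   Approved,Issued
EOF
}

# Keep only rows whose last column is exactly "Pending" and print the name.
pending_csrs=$(sample_csr_output | awk '$NF == "Pending" {print $1}')
echo "$pending_csrs"

# On a real master the same filter would feed the approval step, e.g.:
#   kubectl get csr | awk '$NF == "Pending" {print $1}' | xargs kubectl certificate approve
```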

- When this finishes, check the node status; if the nodes are Ready, everything is working:

$ kubectl get node

Deploy Kubernetes Proxy (run on work01 and work02)

- Configure kube-proxy to use LVS:

$ yum install -y ipvsadm ipset conntrack

- Create the kube-proxy certificate signing request (on master01):

$ cd /etc/etcd/ssl_tmp

$ cat > kube-proxy-csr.json <<EOF
{
  "CN": "system:kube-proxy",
  "hosts": [],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "BeiJing",
      "L": "BeiJing",
      "O": "k8s",
      "OU": "System"
    }
  ]
}
EOF

- Generate the certificate and distribute it to the nodes:

$ cfssl gencert -ca=/etc/etcd/ssl/ca.pem -ca-key=/etc/etcd/ssl/ca-key.pem -config=/etc/etcd/ssl/ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

$ cp kube-proxy*.pem ../ssl/

$ scp kube-proxy*.pem 192.168.184.29:/etc/etcd/ssl/

$ scp kube-proxy*.pem 192.168.184.30:/etc/etcd/ssl/

- Create the kube-proxy kubeconfig file:

$ kubectl config set-cluster kubernetes --certificate-authority=/etc/etcd/ssl/ca.pem --embed-certs=true --server=https://192.168.184.28:6443 --kubeconfig=kube-proxy.kubeconfig

$ kubectl config set-credentials kube-proxy --client-certificate=/etc/etcd/ssl/kube-proxy.pem --client-key=/etc/etcd/ssl/kube-proxy-key.pem --embed-certs=true --kubeconfig=kube-proxy.kubeconfig

$ kubectl config set-context default --cluster=kubernetes --user=kube-proxy --kubeconfig=kube-proxy.kubeconfig

$ kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

$ scp kube-proxy.kubeconfig 192.168.184.29:/etc/etcd/cfg/

$ scp kube-proxy.kubeconfig 192.168.184.30:/etc/etcd/cfg/

$ mv kube-proxy.kubeconfig /etc/etcd/cfg/

- Create the kube-proxy service unit (on work01 and work02):

$ mkdir /var/lib/kube-proxy # on 192.168.184.29 and 192.168.184.30

$ cat > /usr/lib/systemd/system/kube-proxy.service <<EOF
[Unit]
Description=Kubernetes Kube-Proxy Server
Documentation=https://github.com/GoogleCloudPlatform/kubernetes
After=network.target

[Service]
WorkingDirectory=/var/lib/kube-proxy
ExecStart=/usr/local/bin/kube-proxy \
  --bind-address=192.168.184.29 \
  --hostname-override=192.168.184.29 \
  --kubeconfig=/etc/etcd/cfg/kube-proxy.kubeconfig \
  --masquerade-all \
  --feature-gates=SupportIPVSProxyMode=true \
  --proxy-mode=ipvs \
  --ipvs-min-sync-period=5s \
  --ipvs-sync-period=5s \
  --ipvs-scheduler=rr \
  --v=2 \
  --logtostderr=false \
  --log-dir=/etc/etcd/log
Restart=on-failure
RestartSec=5
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF

Note: change the IP addresses in the unit file so that they match the node it is installed on.
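The unit files above hard-code 192.168.184.29, so a copy placed on 192.168.184.30 needs its address flags changed. A minimal sketch of doing that with `sed` follows; `render_for_node` is an illustrative helper name, and the demo works on a shortened temporary copy rather than the real file under /usr/lib/systemd/system.

```shell
# Sketch: print a unit file with one node IP replaced by another.
# render_for_node is an illustrative helper, not part of Kubernetes.
render_for_node() {
    # $1 = source unit file, $2 = IP used in the source, $3 = target node IP
    # Escape the dots so sed matches them literally.
    old_pattern=$(printf '%s' "$2" | sed 's/\./\\./g')
    sed "s/${old_pattern}/$3/g" "$1"
}

# Demo on a shortened temporary copy of the kube-proxy unit.
demo_unit=$(mktemp)
cat > "$demo_unit" <<'EOF'
ExecStart=/usr/local/bin/kube-proxy \
  --bind-address=192.168.184.29 \
  --hostname-override=192.168.184.29 \
  --kubeconfig=/etc/etcd/cfg/kube-proxy.kubeconfig
EOF

rendered=$(render_for_node "$demo_unit" 192.168.184.29 192.168.184.30)
printf '%s\n' "$rendered"
rm -f "$demo_unit"
```

The same helper would apply to kubelet.service, where --address and --hostname-override carry the node IP.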

$ systemctl enable kube-proxy && systemctl start kube-proxy

$ systemctl status kube-proxy

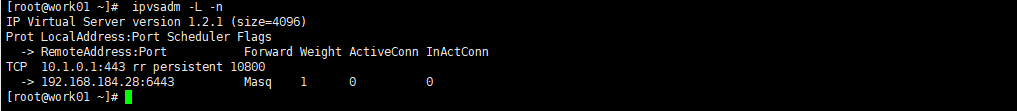

- Check the LVS status:

$ ipvsadm -L -n
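If the table printed by `ipvsadm -L -n` stays empty, one thing worth checking is whether the IPVS kernel modules are loaded, because kube-proxy falls back to iptables mode when they are not available. The sketch below reads a module listing in `/proc/modules` format and prints the modules that are missing. The module list is an assumption based on what IPVS mode commonly needs (newer kernels use `nf_conntrack` instead of `nf_conntrack_ipv4`), and the sample listing is hypothetical.

```shell
# Assumed set of modules for kube-proxy IPVS mode with the rr scheduler.
REQUIRED_IPVS_MODULES="ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4"

missing_ipvs_modules() {
    # Reads /proc/modules-style text on stdin; the module name is field 1.
    loaded=$(awk '{print $1}')
    for mod in $REQUIRED_IPVS_MODULES; do
        # -x matches the whole line, so "ip_vs" does not match "ip_vs_rr".
        if ! printf '%s\n' "$loaded" | grep -qx "$mod"; then
            printf '%s\n' "$mod"
        fi
    done
}

# Hypothetical listing, standing in for the contents of /proc/modules.
missing=$(missing_ipvs_modules <<'EOF'
ip_vs_rr 12600 0 - Live 0x0000000000000000
ip_vs 145497 2 ip_vs_rr, Live 0x0000000000000000
nf_conntrack_ipv4 15053 3 - Live 0x0000000000000000
EOF
)
echo "missing:" $missing

# On a real node: missing_ipvs_modules < /proc/modules
# and then `modprobe <name>` for each module that is reported.
```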

- Check the node status (on master01):

$ kubectl get nodes


Flannel Network Deployment

- Generate the Flannel certificate:

$ cd /etc/etcd/ssl_tmp/

$ cat > /etc/etcd/ssl_tmp/flanneld-csr.json <<EOF
{
  "CN": "flanneld",
  "hosts": [],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "BeiJing",
      "L": "BeiJing",
      "O": "k8s",
      "OU": "System"
    }
  ]
}
EOF

- Generate and distribute the certificate:

$ cfssl gencert -ca=/etc/etcd/ssl/ca.pem -ca-key=/etc/etcd/ssl/ca-key.pem -config=/etc/etcd/ssl/ca-config.json -profile=kubernetes flanneld-csr.json | cfssljson -bare flanneld

$ cp flanneld*.pem /etc/etcd/ssl/

$ scp /etc/etcd/ssl/flanneld*.pem 192.168.184.29:/etc/etcd/ssl/

$ scp /etc/etcd/ssl/flanneld*.pem 192.168.184.30:/etc/etcd/ssl/

- Download the Flannel package:

$ cd && wget https://github.com/coreos/flannel/releases/download/v0.10.0/flannel-v0.10.0-linux-amd64.tar.gz

$ tar zxf flannel-v0.10.0-linux-amd64.tar.gz

$ cp flanneld mk-docker-opts.sh /usr/local/bin/

$ scp flanneld mk-docker-opts.sh 192.168.184.29:/usr/local/bin/

$ scp flanneld mk-docker-opts.sh 192.168.184.30:/usr/local/bin/

- Distribute the helper script to /usr/local/bin:

$ cd kubernetes/cluster/centos/node/bin/

$ cp remove-docker0.sh /usr/local/bin/

$ scp remove-docker0.sh 192.168.184.29:/usr/local/bin/

$ scp remove-docker0.sh 192.168.184.30:/usr/local/bin/

- Configure Flannel:

$ cat > /etc/etcd/cfg/flannel <<EOF
FLANNEL_ETCD="-etcd-endpoints=https://192.168.184.28:2379,https://192.168.184.29:2379,https://192.168.184.30:2379"
FLANNEL_ETCD_KEY="-etcd-prefix=/etc/etcd/network"
FLANNEL_ETCD_CAFILE="--etcd-cafile=/etc/etcd/ssl/ca.pem"
FLANNEL_ETCD_CERTFILE="--etcd-certfile=/etc/etcd/ssl/flanneld.pem"
FLANNEL_ETCD_KEYFILE="--etcd-keyfile=/etc/etcd/ssl/flanneld-key.pem"
EOF

$ scp /etc/etcd/cfg/flannel 192.168.184.29:/etc/etcd/cfg/

$ scp /etc/etcd/cfg/flannel 192.168.184.30:/etc/etcd/cfg/

- Set up the Flannel systemd service:

$ vim /usr/lib/systemd/system/flannel.service

[Unit]
Description=Flanneld overlay address etcd agent
After=network.target
Before=docker.service

[Service]
EnvironmentFile=-/etc/etcd/cfg/flannel
ExecStartPre=/usr/local/bin/remove-docker0.sh
ExecStart=/usr/local/bin/flanneld ${FLANNEL_ETCD} ${FLANNEL_ETCD_KEY} ${FLANNEL_ETCD_CAFILE} ${FLANNEL_ETCD_CERTFILE} ${FLANNEL_ETCD_KEYFILE}
ExecStartPost=/usr/local/bin/mk-docker-opts.sh -d /run/flannel/docker
Type=notify

[Install]
WantedBy=multi-user.target
RequiredBy=docker.service
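This unit is edited by hand in vim, whereas the earlier units were written with `cat <<EOF`. One likely reason (an inference, not something the source states) is that an unquoted here-document lets the shell expand `${FLANNEL_ETCD}` and the other variables before the file is written, leaving an ExecStart line with no flags. Quoting the delimiter keeps them literal, as this sketch on a shortened unit and a temporary file shows.

```shell
# Make sure the variables are unset in this shell, as on a fresh login.
unset FLANNEL_ETCD FLANNEL_ETCD_KEY

unit_file=$(mktemp)

# A quoted delimiter ('EOF') disables parameter expansion inside the
# here-document, so ${...} reaches the file unchanged for systemd to expand.
cat > "$unit_file" <<'EOF'
[Service]
EnvironmentFile=-/etc/etcd/cfg/flannel
ExecStart=/usr/local/bin/flanneld ${FLANNEL_ETCD} ${FLANNEL_ETCD_KEY}
EOF
literal_line=$(grep '^ExecStart=' "$unit_file")

# For comparison: with an unquoted delimiter the shell expands the unset
# variables to empty strings before the text is written.
expanded=$(cat <<EOF
ExecStart=/usr/local/bin/flanneld ${FLANNEL_ETCD} ${FLANNEL_ETCD_KEY}
EOF
)

echo "quoted:   $literal_line"
echo "unquoted: $expanded"
rm -f "$unit_file"
```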

$ scp /usr/lib/systemd/system/flannel.service 192.168.184.29:/usr/lib/systemd/system/

$ scp /usr/lib/systemd/system/flannel.service 192.168.184.30:/usr/lib/systemd/system/

- Create the etcd key on the master node:

$ etcdctl --ca-file /etc/etcd/ssl/ca.pem --cert-file /etc/etcd/ssl/flanneld.pem --key-file /etc/etcd/ssl/flanneld-key.pem --no-sync -C https://192.168.184.28:2379,https://192.168.184.29:2379,https://192.168.184.30:2379 set /etc/etcd/network/config '{ "Network": "10.2.0.0/16","SubnetLen": 24, "Backend": { "Type": "vxlan", "VNI": 1 }}'

- Start the service:

$ systemctl daemon-reload

$ systemctl enable flannel && systemctl start flannel

$ systemctl status flannel

Configure Docker to use Flannel

In the [Unit] section, append flannel.service to the After= line, and add Requires=flannel.service below Wants=.

In the [Service] section, add EnvironmentFile=-/run/flannel/docker after Type=, and append $DOCKER_OPTS to the ExecStart= line.

$ vim /usr/lib/systemd/system/docker.service

[Unit]
Description=Docker Application Container Engine
Documentation=https://docs.docker.com
After=network-online.target firewalld.service flannel.service
Wants=network-online.target
Requires=flannel.service

[Service]
Type=notify
EnvironmentFile=-/run/flannel/docker
ExecStart=/usr/bin/dockerd $DOCKER_OPTS
...

- Distribute the configuration to the other two nodes:

$ rsync -av /usr/lib/systemd/system/docker.service 192.168.184.29:/usr/lib/systemd/system/docker.service

$ rsync -av /usr/lib/systemd/system/docker.service 192.168.184.30:/usr/lib/systemd/system/docker.service

- Restart the Docker service on 192.168.184.28, 192.168.184.29, and 192.168.184.30:

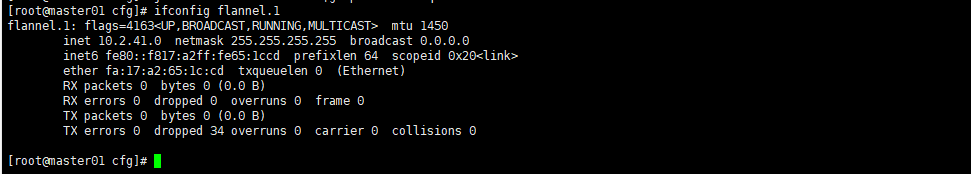

$ systemctl daemon-reload && systemctl restart docker

- Check the flanneld service (the unit installed above is named flannel):

$ journalctl -u flannel | grep 'Lease acquired'

$ ifconfig flannel.1
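Each node should report a lease that lies inside the network written to etcd earlier. The `Network` (10.2.0.0/16) and `SubnetLen` (24) values bound how many such leases exist and how large each one is; the arithmetic can be sketched in shell as below. The results are upper bounds, since flannel and the docker0 bridge reserve some of the range.

```shell
network_prefix=16   # from "Network": "10.2.0.0/16"
subnet_len=24       # from "SubnetLen": 24

# Upper bound on the number of per-node subnets in the network.
subnet_count=$(( 1 << (subnet_len - network_prefix) ))

# Addresses per subnet, minus the network and broadcast addresses.
hosts_per_subnet=$(( (1 << (32 - subnet_len)) - 2 ))

echo "per-node subnets: $subnet_count"                  # prints 256
echo "usable addresses per subnet: $hosts_per_subnet"   # prints 254
```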

至此flannel网络配置完成,k8s的集群也部署完成,下面我们来建立pod测试集群之间网络的连通性.创建第一个K8S应用,测试集群节点是否通信

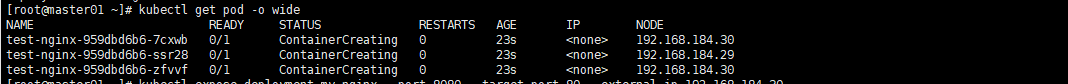

- 创建一个测试用的nginx pod.

$ kubectl run test-nginx --image=nginx --replicas=3 --port=80- 查看获取IP情况

$ kubectl get pod -o wide

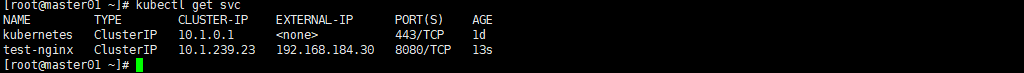

- Expose the deployment by creating a Service:

$ kubectl expose deployment test-nginx --port=8080 --target-port=80 --external-ip=192.168.184.30

- View the Service details:

$ kubectl get svc

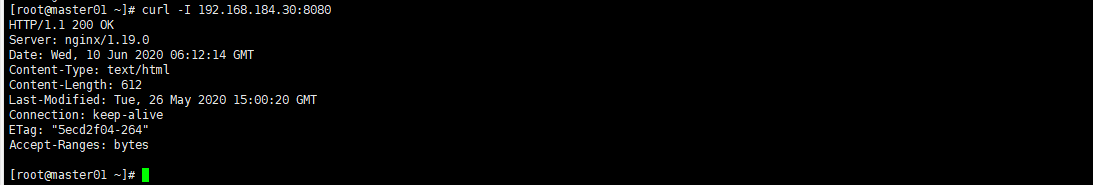

- Test access through the Service; if it responds, the deployment is done:

$ curl -I 192.168.184.30:8080
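The `kubectl run ... --replicas=3` form used above depends on the kubectl version: from kubectl 1.18 on, `kubectl run` creates only a single Pod and no longer accepts `--replicas`. On such versions the same test workload can be described as a Deployment manifest instead. The sketch below writes one to a temporary file; the `app: test-nginx` label and the file location are illustrative choices, not taken from the article.

```shell
# Sketch: write a Deployment manifest equivalent to the test workload.
# The label app=test-nginx and the temporary path are illustrative.
manifest=$(mktemp)
cat > "$manifest" <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: test-nginx
spec:
  replicas: 3
  selector:
    matchLabels:
      app: test-nginx
  template:
    metadata:
      labels:
        app: test-nginx
    spec:
      containers:
      - name: test-nginx
        image: nginx
        ports:
        - containerPort: 80
EOF
echo "wrote $manifest"

# On a machine with cluster access, the manifest would then be applied
# and exposed the same way as in the steps above:
#   kubectl apply -f "$manifest"
#   kubectl expose deployment test-nginx --port=8080 --target-port=80 --external-ip=192.168.184.30
```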

Common kubectl commands

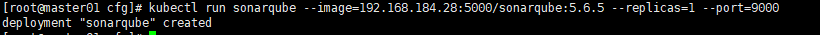

- kubectl run: run an image

$ kubectl run sonarqube --image=192.168.184.28:5000/sonarqube:5.6.5 --replicas=1 --port=9000

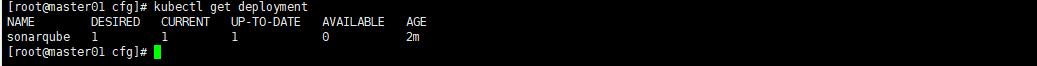

- Confirm the Deployment

$ kubectl get deployment

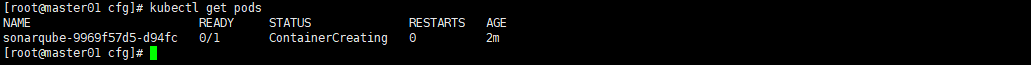

- Confirm the pods

$ kubectl get pods

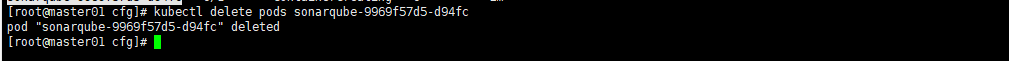

- Delete a pod

$ kubectl delete pods sonarqube-9969f57d5-d94fc

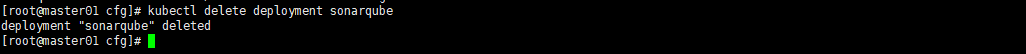

- Delete the deployment

$ kubectl delete deployment sonarqube

This work is released under a CC license; reposts must credit the author and link to this article.
